Designing a Process for Technical Innovation in Engineering Teams

A structured approach to fostering technical innovation within your engineering team, balancing autonomy with standardization. Dive into each step, from problem identification to full implementation.

In last week's post, we looked at some key considerations to have in mind when you decide to implement an internal process for coordinating technical innovation.

As a reminder, this whole discussion stems from the need to balance autonomy and standardization in any engineering team.

If you want to refresh your memory on that broader discussion, have a look at this other article as well:

As promised at the end of last week's post, we'll focus today on designing a process for your team.

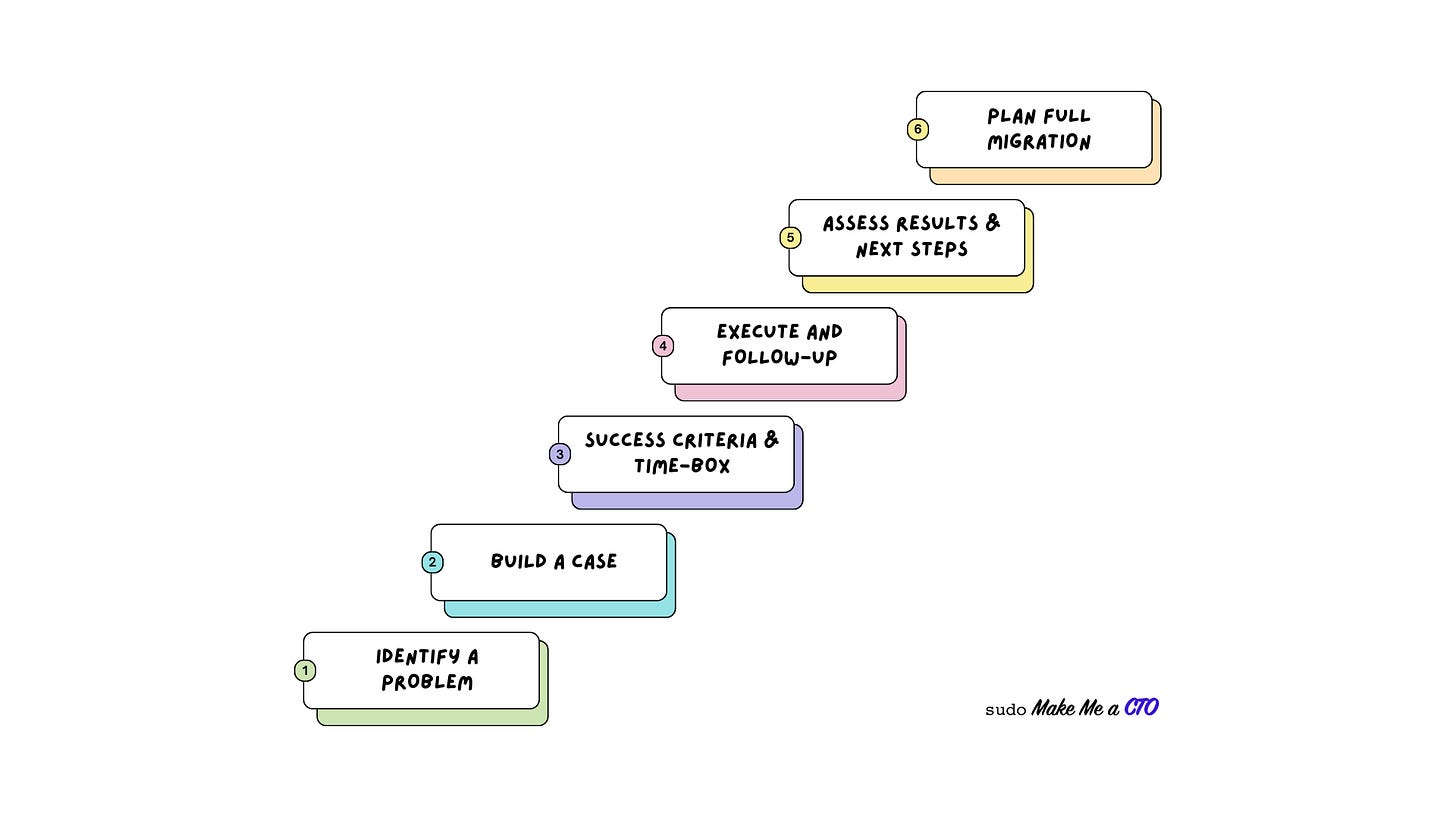

This process is structured around the following steps:

Step 1: Identify a clear problem, pain, or opportunity

Step 2: Build a case for testing one or more alternative solutions

Step 3: Set success criteria and time-box the discovery phase

Step 4: Execute and follow up frequently

Step 5: Assess the result of the experiment, and define the next steps

Step 6: Plan the required time to migrate to the new solution

Let's look at them in detail.

🔬 Step 1: Identify a clear problem, pain, or opportunity

You want your team to be very explicit and clear about the problem they intend to solve through technical innovation.

You might feel tempted to give the team some space to play with new tech to “keep them motivated”, but that would be merely scratching the surface of the problem. Not only will its impact on motivation be limited, but it will also likely increase the overall complexity and technical debt the team has to work with, which in turn will lead to further frustration.

A lot of time and energy is wasted just following the lures of the latest language, framework, or - these days - AI tool.

As a leader, you're responsible for getting the best value out of your team's work. This means the threshold should be high for you to add “one more thing” to the long list of items already on the team's table.

Be very wary of two common pitfalls when launching tech improvement initiatives.

Disregarding Opportunity Cost. Everything your team does comes at the cost of not doing something else. So make sure the problem your team wants to work on is high enough on the list of problems your company is facing.

Local Optimization. The Theory of Constraints teaches us that optimizing the performance of a non-bottleneck part of a system does not improve the performance of the overall system. Due to how organizations are set up, often teams focus on local optimization and can lose the perspective of the main bottlenecks in the overall system.
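To make the Local Optimization point concrete, here is a minimal sketch. It assumes a serial delivery pipeline whose overall throughput is capped by its slowest stage; the stage names and capacity numbers are hypothetical and chosen only for illustration.

```python
# Hypothetical weekly capacity (work items) of each stage in a delivery pipeline.
# Stage names and numbers are made up for illustration.
stages = {"development": 12, "code_review": 5, "qa": 9, "deployment": 20}


def throughput(capacities: dict[str, int]) -> int:
    """In a serial pipeline, overall throughput is capped by the slowest stage."""
    return min(capacities.values())


def bottleneck(capacities: dict[str, int]) -> str:
    """Return the name of the stage with the lowest capacity."""
    return min(capacities, key=capacities.get)


baseline = throughput(stages)

# Local optimization: doubling the capacity of a non-bottleneck stage.
faster_dev = {**stages, "development": 24}

# Addressing the actual constraint instead.
faster_review = {**stages, "code_review": 8}

print(bottleneck(stages))         # code_review
print(baseline)                   # 5
print(throughput(faster_dev))     # 5, no improvement
print(throughput(faster_review))  # 8
```

Doubling development capacity leaves the overall number untouched, while a modest improvement to the constrained stage lifts the whole system.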

Start by listing problems your team is facing and that would require some form of technical innovation.

Identify those that are real bottlenecks and pick the one that, according to data and observation, seems to have the biggest impact.

This first step alone will help you weed out most of the tempting shiny-object-syndrome-driven curveballs you and other team members might be throwing at yourselves.

📑 Step 2: Build a case for testing one or more alternative solutions

Now that you have identified the problem that needs to be mitigated or solved via a new technological approach, it's time to build a case for it.

You are looking for thoroughness and intellectual discipline, not processes and bureaucracy.

As part of the case, you should ideally identify a few different approaches you will evaluate to solve the problem. This will not only allow you to benchmark different solutions against each other, it will also help filter out more cases of the “a new framework promising wonders just came out, we need to adopt it” approach. By forcing yourself and the team to objectively look at a few different options, you'll shift the focus away from the solution in search of a problem and direct it instead toward finding the best fit for the issue at hand.

Building such a case should not require lots of bureaucratic and tedious work.

At this stage you should have the following elements clear:

1. The problem you want to solve, and why you think this is a bottleneck for your organization.

2. A list of potential approaches or solutions you believe could address the problem.

3. For each proposed solution, a rationale for why you believe it should be considered a viable option.

Just put together a document that covers points 1-3 to begin with. You can always add more sophistication if needed in the future.

You might be tempted now to just go ahead and start the implementation. Hold your horses!

An important step is required before you jump into execution, and it has to do with setting expectations and boundaries.

⏳ Step 3: Set success criteria and time-box the discovery phase

Before you start the implementation, you will want to proactively protect yourself against two beasts that might get in the way of a successful evaluation: Confirmation Bias and Sunk Cost Fallacy.

Let's look at their definitions briefly.

👹 Confirmation Bias

People’s tendency to process information by looking for, or interpreting, information that is consistent with their existing beliefs.

Source: Britannica.com

👻 Sunk Cost Fallacy

Sunk costs often influence people's decisions, with people believing that investments (i.e., sunk costs) justify further expenditures. People demonstrate "a greater tendency to continue an endeavor once an investment in money, effort, or time has been made". This is the sunk cost fallacy, and such behavior may be described as "throwing good money after bad".

Source: Wikipedia

These are both common manifestations of human struggles to be rational and objective once they are involved with a decision that has emotional implications.

Whenever you or someone in your team becomes the main sponsor for a technical initiative, there will be a tendency towards confirmation bias as a way to confirm that the decision was a good one. There will also be a tendency to be influenced by the sunk cost that went into a certain investment as a reason to justify further investments.

The way you protect yourself from both threats is by deploying two mechanisms: agreed-upon success criteria, and time-boxing the first implementation.

By setting success criteria up front, you equip yourself and the team with an objective way to evaluate the initiative, one that turns confirmation bias into something you can poke fun at with colleagues in meetings. These criteria should allow anyone not directly involved with the project to look at a set of objective measurements and assess whether or not the stated goals have been achieved.

These measurements should ideally be quantitative metrics, but depending on the issue at hand they can also include qualitative data, such as results from interviews, surveys, reported happiness, etc.

Even when you're going to rely mostly on qualitative metrics, it's paramount to define them in advance so that there is little space for confirmation bias when the time comes to decide whether or not the initiative succeeded.
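One way to keep yourself honest is to write the criteria down as data before any work begins, and only compare measurements against them at the end. The sketch below is an illustration under assumptions, not a prescribed tool: the `Criterion` class, the `evaluate` helper, the metric names, and the target values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    """One success criterion, agreed before the experiment starts."""
    name: str
    target: float
    higher_is_better: bool = True

    def is_met(self, measured: float) -> bool:
        if self.higher_is_better:
            return measured >= self.target
        return measured <= self.target


def evaluate(criteria: list[Criterion], measurements: dict[str, float]) -> dict[str, bool]:
    """Check each criterion; a missing measurement counts as not met."""
    results = {}
    for c in criteria:
        results[c.name] = c.name in measurements and c.is_met(measurements[c.name])
    return results


# Hypothetical criteria, written down before any work begins.
criteria = [
    Criterion("p95_build_minutes", target=10, higher_is_better=False),
    Criterion("developer_survey_score", target=4.0),
]

# Hypothetical measurements collected at the end of the time box.
measurements = {"p95_build_minutes": 8.5, "developer_survey_score": 3.6}

results = evaluate(criteria, measurements)
print(results)
print("experiment succeeded:", all(results.values()))
```

Because the targets are frozen before the experiment, nobody can quietly swap in a more flattering metric afterwards. A qualitative signal such as a survey score fits the same shape, as long as the threshold is agreed in advance.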

Speaking of time, time-boxing is crucial to prevent such a project from dragging on indefinitely in the hope that by spending just a couple more weeks or months the problem will finally be solved.

The time interval you select is highly dependent on the specific initiative, size of the team, level of uncertainty, etc.

You want to set it long enough to give the team enough breathing room to explore and discard a few approaches in the search for the ideal one, but short enough to ensure the focus will primarily be on validating the riskiest and most fundamental assumptions.

Keep in mind that the longer the time box, the higher the risk that the project will have to be canceled mid-way due to the need to refocus business priorities. The risk of falling victim to the sunk-cost fallacy also increases proportionately to the amount of time - i.e. investment - you have put into it.

One approach you can take with particularly complex initiatives is to time box in incremental steps. Make sure you start by focusing on the hardest problem first, as this will likely be the main determinant of success or failure for the whole operation. And then allow for follow-up time-boxed milestones if and only if the previous one has been completed successfully.
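The incremental approach can be sketched as a simple gate: a milestone only starts if every milestone before it has succeeded. This is a minimal illustration; the `Milestone` class, the `next_milestone` helper, and the plan entries are hypothetical names and values.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Milestone:
    """One time-boxed step of a larger initiative (all values hypothetical)."""
    name: str
    time_box_weeks: int
    succeeded: Optional[bool] = None  # None means not evaluated yet


def next_milestone(plan: list[Milestone]) -> Optional[Milestone]:
    """Return the next milestone to start, or None if the plan is done or a step failed."""
    for milestone in plan:
        if milestone.succeeded is None:
            return milestone   # first step not yet evaluated
        if not milestone.succeeded:
            return None        # a failed step blocks everything after it
    return None                # every step has succeeded


# Ordered with the hardest, riskiest problem first.
plan = [
    Milestone("validate core assumption", time_box_weeks=2, succeeded=True),
    Milestone("integrate with one service", time_box_weeks=3),
    Milestone("measure against success criteria", time_box_weeks=2),
]

upcoming = next_milestone(plan)
print(upcoming.name if upcoming else "stop")  # integrate with one service
```

Putting the riskiest step first means that a failure is discovered after the smallest possible investment, which also limits how much sunk cost can build up.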

At this point, if you're part of the team that has gotten the chance to go out and have fun solving a hard problem, you might think you can get into a cave for the next 6 months and not be disturbed by anyone external to the team… not so fast!

Let's see why in the next section.

✅ Step 4: Execute and follow up frequently

I'm a big proponent of protecting deep work and avoiding unnecessary or disruptive meetings. At the same time, it’s crucial to have frequent and regular touchpoints when running projects with a high degree of uncertainty.

You want to avoid frequent context switches, which are often caused by an excess of ad-hoc, unscheduled messages going back and forth.

Once a team has started working on a prototype for an important and non-trivial technical evolution, you will need to have frequent touchpoints scheduled in a way that minimizes disruption. They should be scheduled close to natural breaking points: the beginning or end of the week, and the beginning or end of work segments - mornings and afternoons.

Things tend to be more complicated if your team is distributed across multiple time zones, but that should not become an excuse for haphazardly scheduling meetings.

The agenda for those meetings should include the following topics:

Did we deliver what we said we would last time we met? i.e., is the team progressing according to the plan? If not, what happened that caused the deviation?

What have we learned since we last met? How does that change our confidence in the experiment succeeding at the end of the time-box period?

Do we have enough evidence to stop the experiment immediately and pursue a different approach?

Is there any blocker or external dependency that the team needs some help dealing with?

The primary goal of these touchpoints is to ensure the project is progressing as expected and that the plan can be adjusted as more evidence arises.

The secondary goal is to avoid the team drifting into over-engineering or side quests by keeping its focus on the stated objectives and providing a steady drumbeat to help the team pace its execution.

These check-ins should take place regularly until you either decide to abort the experiment or until you reach the end of the allotted time.

🤔 Step 5: Assess the result of the experiment, and define the next steps

This is the stage where it becomes apparent if you've been following a good process. If you've done a good job until now in framing the problem, expectations, and time investment, making a decision at this point should be relatively easy.

Conversely, if you and your team usually engage in heated debates at this stage, that might be a sign that you've left too much space for personal interpretation of the desired outcomes. This is when you see a lot of post-factum metrics being pulled up to prove that the proof-of-concept implementation had some positive impact. But going back to what we discussed about Local Optimization, that doesn't necessarily mean it will have a positive impact on the company as a whole.

Seriously, if you've done a good job up to now and you've hit the end of the time-boxed experiment, you should be able to have a quick meeting to review the results, ask the relevant questions to make sure measurements have been performed according to the agreed-upon criteria, and then declare the experiment either satisfactory or not.

If the experiment has not met the required expectations, you will likely go back to Step 2 and figure out alternative approaches on how to solve the problem. After all, this is a serious problem and you don't want to stop at the first attempt.

If the experiment has succeeded, the next step will require you to plan how to go from there to full implementation.

📆 Step 6: Plan the required time to migrate to the new solution

Unless you designed your experiment to be way too broad and detailed, the work is not finished here. At this point, you have validated that a certain approach can work as a solution. Getting from there to a production-ready solution that is fully adopted across your team might be a long journey, often way longer than what it took to get to where you are now.

This is also where the work required switches from exciting discovery and experimentation to the more routine and, for some people, less interesting work of polishing the solution, rolling it out, decommissioning old solutions, etc.

As the old saying goes, what got you here won't get you there. In this new phase, you're not trying to move as fast as possible to validate or discard the hypothesis. You are building a piece of technology that will be part of the stack for many years.

It is now paramount to invest in making the solution robust with a good level of test coverage, documentation, etc.

Depending on your team’s composition you might face resistance at this stage, with some advocating that the problem is solved and that time would be better spent moving on to the next exciting project.

Though you should never aim for perfection, I recommend you handle these tensions carefully, lest you end up with a pile of unfinished pieces of your stack that will cause all sorts of funny side effects down the road.

I've seen new technology get marked as legacy a couple of years after being introduced because of an unfinished implementation.

😰 Common complications

The process I've described will work in most cases, but reality is often way more complicated than the ideal case.

The most common complications I've encountered fall into either one of these two categories:

A new promising piece of technology that we believe will be a game changer, but we don't know how just yet. GenAI is the most salient example these days.

A team that isn't happy with a solution provided by another team, and sets off building a competing alternative rather than collaborating to improve the existing one.

As these cases fall outside the standard process, they require more bespoke solutions.

When dealing with them, I recommend you follow a couple of principles:

First, when experimenting with new technology to discover what you can do with it, frame it as an investment in learning. It can take many forms: a spike, a hackathon, a small R&D team, etc. Just make sure this doesn't become the default approach to avoid the need to go through a structured process.

Secondly, no process or technical innovation will solve profound cultural and trust issues existing in your organization. When you're dealing with teams competing against each other because they know better, you should probably spend the time to identify the root causes of that and work with the relevant leaders to (re)establish an environment of trust and collaboration.

Once that is in place, you can design a process around healthy competition across teams, setting clear rules and responsibilities when it comes to maintaining and evolving new solutions that have been introduced.

🏁 Conclusions

Today's article closes a two-part series on establishing processes to guide technical innovation in your team.

We looked at a process you can implement revolving around a handful of key steps.

As usual in these cases, the worst thing you can do is to merely copy-paste what you read here and hope it will work flawlessly for your specific case.

Take these as guidelines, and then build your own process.

If you do so or have done it already, I'd love to hear about your experience and thoughts in the comments section.
